Six Things You Could Find Out About DeepSeek China AI

We now have a 3D device mesh with an expert-parallel shard dimension, a ZeRO-3 shard dimension, and a replicate dimension for pure data parallelism. Expert parallelism is a form of model parallelism in which we place different experts on different GPUs for better performance. We first manually place experts on different GPUs, typically sharding across a node so that we can leverage NVLink for fast GPU communication when we route tokens.

To ensure robustness to failures, we need to checkpoint often and save and load checkpoints in the most performant way possible to minimize downtime. With our integration in Composer, we can reliably upload checkpoints to cloud storage as frequently as every 30 minutes and automatically resume from the latest checkpoint in less than 5 minutes in the event of a node failure.

An MoE layer stays cheap to compute because the gating network sends each token to only a subset of experts, which reduces the computational load. The risk is that routing becomes uneven, with a few experts receiving most of the tokens. To alleviate this problem, a load balancing loss is introduced that encourages even routing to all experts.
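The post does not say which load balancing loss is used. As a minimal sketch, assuming one widely used formulation (the auxiliary loss `alpha * N * sum_i f_i * P_i`, where `f_i` is the fraction of tokens dispatched to expert `i` and `P_i` is the mean router probability for expert `i`), the idea could look like this in plain Python. The function name, the `alpha` default, and the top-1 assignment are assumptions made for illustration.

```python
def load_balancing_loss(router_probs, assignments, num_experts, alpha=0.01):
    """Auxiliary loss alpha * N * sum_i f_i * P_i (assumed formulation).

    router_probs: one list of per-expert probabilities per token.
    assignments:  index of the expert each token was dispatched to (top-1).
    """
    num_tokens = len(assignments)
    if num_tokens == 0 or len(router_probs) != num_tokens:
        raise ValueError("need one probability row per routed token")

    # f[i]: fraction of tokens actually sent to expert i.
    f = [0.0] * num_experts
    for expert in assignments:
        f[expert] += 1.0 / num_tokens

    # p[i]: mean router probability assigned to expert i.
    p = [sum(row[i] for row in router_probs) / num_tokens
         for i in range(num_experts)]

    return alpha * num_experts * sum(fi * pi for fi, pi in zip(f, p))


# Evenly routed tokens versus every token collapsing onto expert 0.
balanced = load_balancing_loss([[0.5, 0.5]] * 4, [0, 1, 0, 1], num_experts=2)
collapsed = load_balancing_loss([[0.9, 0.1]] * 4, [0, 0, 0, 0], num_experts=2)
```

Under this formulation the loss is smallest when both the dispatch fractions and the router probabilities are uniform, so adding it to the training objective pushes the router toward even expert usage.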

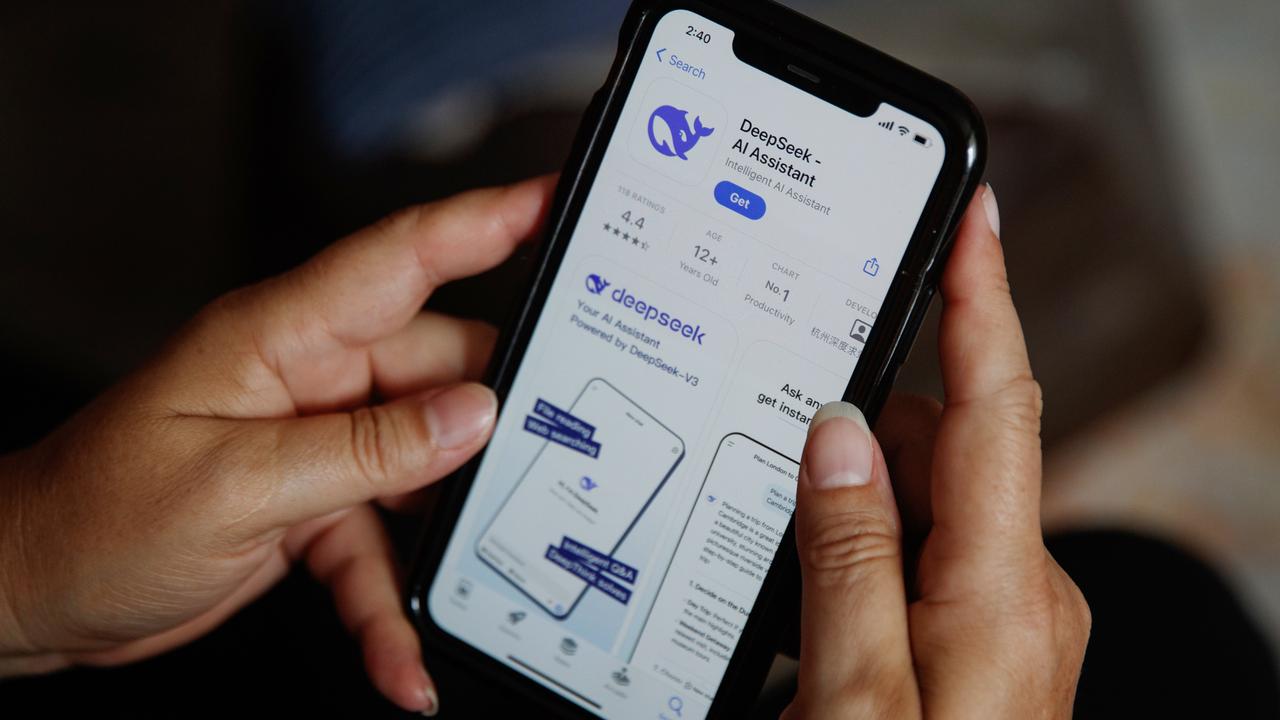

... GPUs, network bandwidth quickly becomes a bottleneck. By parallelizing checkpointing across GPUs, we can spread out network load, improving robustness and speed. As we scale to thousands of GPUs, the cost of communication across devices increases, slowing down training. This approach allows us to balance memory efficiency and communication cost during large-scale distributed training. Additionally, when training very large models, the size of checkpoints may be very large, leading to very slow checkpoint upload and download times.

DeepSeek was founded in 2023 by a hedge fund manager, Liang Wenfeng. The company is headquartered in Hangzhou, China, and focuses on developing open-source large language models.

We've integrated MegaBlocks into LLM Foundry to enable scaling MoE training to thousands of GPUs.
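To make the idea of parallelizing checkpointing across GPUs concrete, here is a toy sketch in which each rank writes only its own slice of the model state. It is not the checkpointing API used in the post: JSON files in a temporary directory stand in for cloud storage, and `shard_keys`, `save_shard`, `load_all`, and the round-robin split are hypothetical names and choices made for illustration.

```python
import json
import os
import tempfile


def shard_keys(keys, world_size):
    """Assign parameter names to ranks round-robin (a hypothetical policy)."""
    ordered = sorted(keys)
    return [ordered[rank::world_size] for rank in range(world_size)]


def save_shard(state, rank, world_size, directory):
    """Each rank writes only its own slice of the state to its own file."""
    mine = shard_keys(state.keys(), world_size)[rank]
    path = os.path.join(directory, f"shard_{rank}.json")
    with open(path, "w") as fh:
        json.dump({key: state[key] for key in mine}, fh)
    return path


def load_all(world_size, directory):
    """Reassemble the full state by reading every shard file."""
    state = {}
    for rank in range(world_size):
        with open(os.path.join(directory, f"shard_{rank}.json")) as fh:
            state.update(json.load(fh))
    return state


state = {"layer0.w": [1.0, 2.0], "layer0.b": [0.5],
         "layer1.w": [3.0], "layer1.b": [0.0]}
with tempfile.TemporaryDirectory() as tmp:
    # In a real job every rank would call save_shard once, concurrently.
    paths = [save_shard(state, rank, 2, tmp) for rank in range(2)]
    restored = load_all(2, tmp)
```

Because every rank uploads a file roughly `1 / world_size` the size of the full checkpoint, no single network link has to carry the whole model.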

In this blog post, we'll talk about how we scale to over three thousand GPUs using PyTorch Distributed and MegaBlocks, an efficient open-source MoE implementation in PyTorch. When using an MoE in LLMs, the dense feed-forward layer is replaced by an MoE layer, which consists of a gating network and a number of experts (Figure 1, Subfigure D).

The number of experts and how experts are chosen depend on the implementation of the gating network, but a common method is top k. Both the number of experts and the choice of k are important factors in designing MoEs. A higher number of experts allows scaling up to larger models without increasing computational cost. This means that the model has a higher capacity for learning; however, past a certain point the performance gains tend to diminish. During inference, a higher top k generally leads to slower inference speed.
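Top-k gating as described above can be sketched in a few lines of plain Python. This is a toy illustration, not the MegaBlocks implementation: the experts are scalar functions, the router logits are made-up numbers, and renormalizing the selected weights so they sum to 1 is an assumption (some implementations skip that step).

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(logits, k):
    """Return [(expert_index, weight), ...] for the k highest-scoring experts."""
    if not 1 <= k <= len(logits):
        raise ValueError("k must be between 1 and the number of experts")
    probs = softmax(logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    # Assumption: renormalize the selected weights so they sum to 1.
    norm = sum(probs[i] for i in chosen)
    return [(i, probs[i] / norm) for i in chosen]


def moe_layer(x, logits, experts, k):
    """Weighted sum of the outputs of the selected experts only."""
    return sum(weight * experts[i](x) for i, weight in top_k_route(logits, k))


# Toy usage: four "experts" that simply scale a scalar input.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0)]
router_logits = [0.1, 2.0, -1.0, 2.0]  # hypothetical gating output for one token
routes = top_k_route(router_logits, k=2)
output = moe_layer(1.0, router_logits, experts, k=2)
```

Only `k` of the experts run for each token, which is why raising `k` increases the work done per token at inference time.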
